The narrative around AI agents has largely focused on capabilities and use cases. But recent industry data tells a more nuanced story: company size isn’t just a demographic detail; it’s a key lens for understanding how AI agents are actually being integrated into business operations.

The Scale-Control Paradox

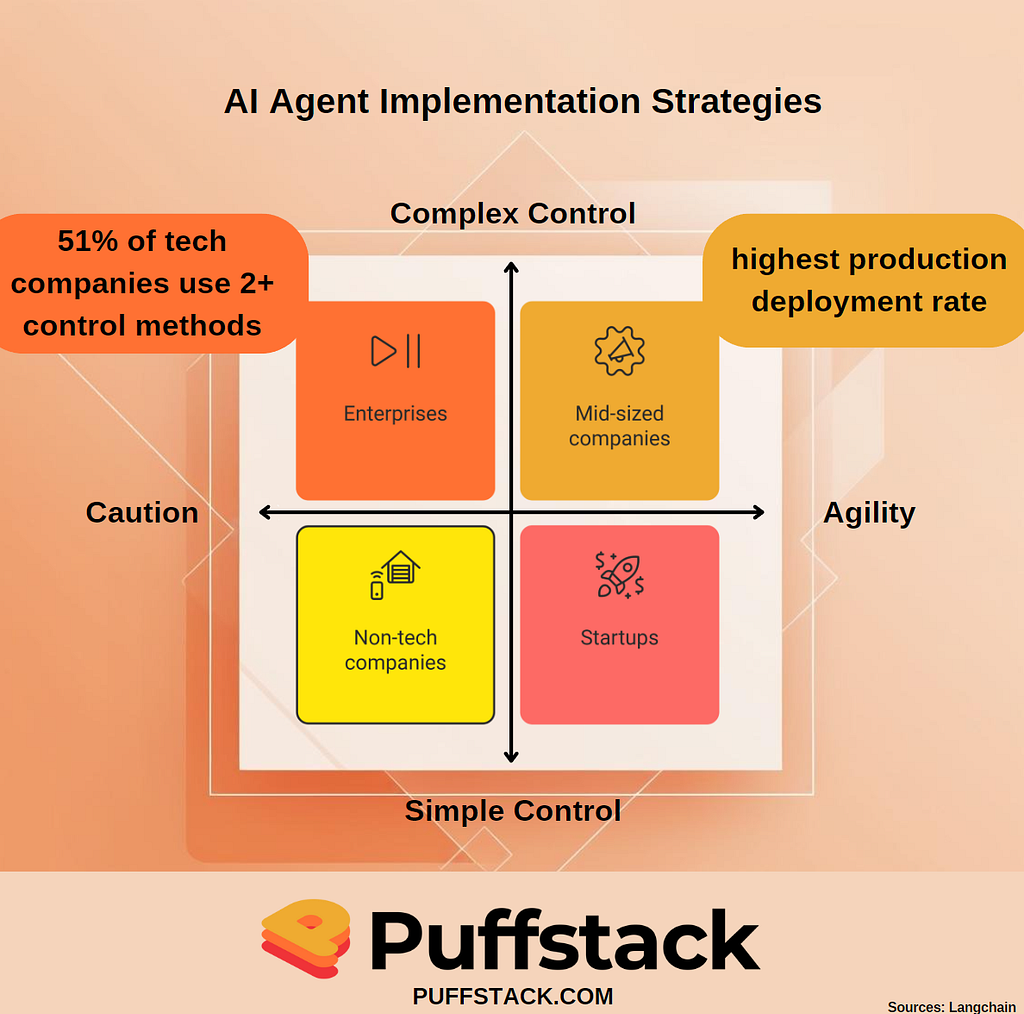

Here’s what’s fascinating: while 51% of companies have AI agents in production, the implementation approaches reveal a stark divide. Enterprises (2000+ employees) overwhelmingly favor read-only permissions and multiple control layers, while startups (<100 employees) prioritize tracing and rapid deployment. This isn’t just about risk tolerance — it’s about fundamental differences in how organizations view AI agent integration.

The real insight? The highest adoption isn’t coming from either extreme. Mid-sized companies (100–2000 employees) report the highest production deployment rate, at 63%. Why? A plausible explanation is that they strike a balance between enterprise caution and startup agility.

Quality vs. Speed

Performance quality stands out as the top concern across all company sizes, but here’s where it gets interesting: smaller companies cite it roughly twice as often as cost (45.8% vs. 22.4%). This isn’t just about maintaining standards; it reveals a fundamental shift in how we think about AI deployment.

Traditional technology adoption tends to follow a cost-first model. With AI agents, we’re seeing a quality-first paradigm emerge instead. This suggests that AI agents aren’t being treated as just another tool; they’re being viewed as core operational components from day one.

The Multi-Control Advantage

A particularly revealing pattern emerges in control strategies. Tech companies are about 30% more likely than non-tech companies to implement multiple control methods (51% vs. 39%, a 12-point gap). But here’s the counterintuitive part: this added control complexity appears to go hand in hand with more deployment success, not less.

This suggests that the key to successful AI agent implementation isn’t choosing the right control method; it’s building a layered approach that combines multiple strategies. Think of it as the “defense in depth” principle from security, applied to AI agent management.
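To make the layered idea concrete, here is a minimal Python sketch. It assumes a hypothetical setup in which every proposed agent action passes through a chain of independent checks (a tool allowlist, an optional read-only policy, and a human-approval gate for high-risk actions) and every decision is recorded in a trace. The names `Action`, `LayeredGuard`, `tool_allowlist`, `read_only`, and `human_approval`, along with the numeric risk score, are illustrative assumptions, not part of the survey or of any particular framework.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Set

@dataclass
class Action:
    """A proposed agent action (hypothetical structure for illustration)."""
    tool: str
    writes: bool  # True if the action modifies external state
    risk: float   # assumed risk score: 0.0 (harmless) to 1.0 (severe)

# A control layer returns None to allow the action, or a reason string to deny it.
Control = Callable[[Action], Optional[str]]

def tool_allowlist(allowed: Set[str]) -> Control:
    """Deny any tool that is not explicitly allowlisted."""
    def check(action: Action) -> Optional[str]:
        if action.tool not in allowed:
            return f"tool '{action.tool}' not allowlisted"
        return None
    return check

def read_only(action: Action) -> Optional[str]:
    """Deny every action that writes, as in a strict read-only policy."""
    return "write blocked by read-only policy" if action.writes else None

def human_approval(threshold: float, approve: Callable[[Action], bool]) -> Control:
    """Escalate actions at or above the risk threshold to a human reviewer."""
    def check(action: Action) -> Optional[str]:
        if action.risk >= threshold and not approve(action):
            return "human reviewer rejected high-risk action"
        return None
    return check

@dataclass
class LayeredGuard:
    layers: List[Control]
    trace: List[str] = field(default_factory=list)  # audit log of every decision

    def authorize(self, action: Action) -> bool:
        # Every layer must pass; the first denial stops the action.
        for layer in self.layers:
            reason = layer(action)
            if reason is not None:
                self.trace.append(f"DENY {action.tool}: {reason}")
                return False
        self.trace.append(f"ALLOW {action.tool}")
        return True

# Usage: an allowlist plus a human gate; the reviewer stub rejects everything.
guard = LayeredGuard([
    tool_allowlist({"search", "summarize", "send_email"}),
    human_approval(threshold=0.7, approve=lambda a: False),
])
guard.authorize(Action("search", writes=False, risk=0.1))     # allowed
guard.authorize(Action("delete_db", writes=True, risk=0.9))   # stopped by the allowlist
guard.authorize(Action("send_email", writes=True, risk=0.8))  # stopped by the human gate
for entry in guard.trace:
    print(entry)
```

Because each layer can veto an action on its own, a gap in one control (say, an over-broad allowlist) can still be caught by the next, which is the point of defense in depth. Swapping layers in or out is how the same guard could serve a cautious enterprise profile (add `read_only`) or a lighter startup profile (allowlist plus tracing).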

Beyond Basic Automation

The most successful implementations in 2024 aren’t just automating tasks; they’re changing how organizations handle decision-making. Here’s the breakdown of the leading use cases:

- 58% use AI agents for research and summarization

- 53.5% for personal productivity enhancement

- 45.8% for customer service

But the real story isn’t in these numbers; it’s in how these use cases are evolving. Organizations appear to be moving beyond simple task automation toward what I call “decision augmentation”: using AI agents not just to complete tasks, but to enhance human decision-making.

The Control Evolution

The most sophisticated implementations show an emerging pattern: a shift from binary control (permitted vs. not permitted) to what I call “adaptive control frameworks.” These frameworks adjust control levels based on:

- Task complexity

- Historical performance

- Risk level

- User expertise

This represents a fundamental shift from the current dominant model of static permissions to a more nuanced, context-aware approach.
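One way to picture an adaptive control framework is as a function that maps the four factors above to an oversight level. The minimal Python sketch below assumes each factor is already normalized to a 0 to 1 scale; the weights, thresholds, the four `ControlLevel` tiers, and the hard block for very high-risk actions are all hypothetical choices for illustration, not values drawn from the survey.

```python
from dataclasses import dataclass
from enum import Enum

class ControlLevel(Enum):
    AUTONOMOUS = 0      # agent acts with no human involvement
    REVIEW_AFTER = 1    # agent acts; a human audits the trace afterwards
    APPROVE_BEFORE = 2  # a human must approve before the action runs
    BLOCKED = 3         # action is never automated

@dataclass
class TaskContext:
    complexity: float      # 0 = trivial, 1 = highly complex
    success_rate: float    # agent's historical success rate on similar tasks
    risk: float            # 0 = harmless, 1 = severe or irreversible impact
    user_expertise: float  # 0 = novice operator, 1 = domain expert

# Hypothetical weights (they sum to 1, so the score stays in [0, 1]).
W_COMPLEXITY, W_FAILURE, W_RISK, W_INEXPERIENCE = 0.25, 0.25, 0.35, 0.15

def oversight_score(ctx: TaskContext) -> float:
    """Higher score means more human oversight is warranted."""
    return (W_COMPLEXITY * ctx.complexity
            + W_FAILURE * (1.0 - ctx.success_rate)
            + W_RISK * ctx.risk
            + W_INEXPERIENCE * (1.0 - ctx.user_expertise))

def control_level(ctx: TaskContext) -> ControlLevel:
    if ctx.risk >= 0.9:
        return ControlLevel.BLOCKED  # static ceiling that no history can override
    score = oversight_score(ctx)
    if score < 0.3:
        return ControlLevel.AUTONOMOUS
    if score < 0.6:
        return ControlLevel.REVIEW_AFTER
    return ControlLevel.APPROVE_BEFORE

summarize = TaskContext(complexity=0.2, success_rate=0.95, risk=0.1, user_expertise=0.8)
new_workflow = TaskContext(complexity=0.7, success_rate=0.3, risk=0.6, user_expertise=0.4)
proven_workflow = TaskContext(complexity=0.7, success_rate=0.95, risk=0.6, user_expertise=0.4)

print(control_level(summarize))        # ControlLevel.AUTONOMOUS
print(control_level(new_workflow))     # ControlLevel.APPROVE_BEFORE
print(control_level(proven_workflow))  # ControlLevel.REVIEW_AFTER
```

The adaptive part is visible in the last two calls: the same task drops from pre-approval to after-the-fact review once the agent’s historical success rate rises, whereas a static permission model would treat both cases identically. The fixed risk ceiling is a deliberate design choice that keeps a non-negotiable floor of control beneath the adaptive layer.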

Looking Ahead

The next phase of AI agent adoption won’t be driven by technological capabilities alone. The data suggests we’re moving toward a model where successful implementation depends on:

- Adaptive control frameworks

- Multi-layered oversight systems

- Context-aware permission structures

- Integrated quality monitoring

The organizations that understand and adapt to these patterns will be best positioned to leverage AI agents effectively in the coming years.

This analysis is based on insights from LangChain’s comprehensive State of AI Agents 2024 survey of over 1,300 professionals across various industries and company sizes. The raw data and initial findings were published by LangChain, while the analysis and insights presented here are original interpretations of the data.